@40hz: Ah! Sorry. I think I see what you mean now.

I had originally thought that the Wayback machine might be a permanent one-way archive, but then I realised that quite a lot of stuff never seemed to make it into the archive in the first place. I later discovered that this was probably because the rules set up in the robots.txt file (or something) were things that robots/crawlers would have to obey (apparently not because of any statutory obligation, but because of a "professional" obligation).

Later, when I was researching a "bad science" investigative website that had shut down because of legal threats from lawyers acting for some of the bad/fraudulent scientists whom it apparently exposed, I discovered that, though most of the website was in the archive, the offending parts of it had apparently been expunged.

What that seemed to indicate was that, not only was http://wayback.archive.org/ a necessarily passive robot custodian of what website owners wanted to permit its crawlers to access by default, but also that they would action any and all subsequent requests for expunging archived content. I could be wrong in some of the above, because I have deduced a lot of it from experience. I don't know it for a verifiable fact.

What I was unaware of until reading the posts I linked to, was that putting a rule into a website's robots.txt or something, to block the Archive from crawling that website, could/would necessarily be used to force a retrospective expunging of all previous archived material from that website.
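For anyone wondering what such a rule looks like in practice, here is a rough sketch using Python's standard `urllib.robotparser`. It only shows how a well-behaved crawler would read a robots.txt file at crawl time; it says nothing about the retrospective expunging, which would be a matter of the Archive's own policy. I am assuming `ia_archiver` as the user-agent name historically associated with the Archive's crawler, and the `example.com` URL and the `is_allowed` helper are just made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: blocks the (assumed) Archive crawler name
# "ia_archiver" from the whole site, while leaving other crawlers free.
ROBOTS_TXT = """\
User-agent: ia_archiver
Disallow: /

User-agent: *
Disallow:
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if a crawler honouring robots.txt may fetch the URL."""
    parser = RobotFileParser()
    # parse() takes the file's lines; no network access is needed here.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# The blocked crawler is refused; any other crawler is permitted.
print(is_allowed(ROBOTS_TXT, "ia_archiver", "http://example.com/page.html"))   # False
print(is_allowed(ROBOTS_TXT, "SomeOtherBot", "http://example.com/page.html"))  # True
```

Note that nothing in this enforces anything: the crawler itself chooses to run a check like this, which fits the "professional obligation" idea rather than a statutory one.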

My new awareness on this point means that I have just turned from being a strong supporter of Archive.org to being an indifferent non-supporter. I mean, what's the point? Humanity's creative drive to monetize everything has led to an entirely new and dominant market for ubiquitous e-commerce that has been created and developed in the www. However, according to the Pareto principle, roughly 80% of website content is likely to be puerile rubbish and 20% of it useful facts/truth. The www has moved from the original state of being a repository of, and a communications medium for scientific truth/research, to the point where facts/truth would seem to have become victims of a wave of absurdity/irrationality. For example, you only need to look at the evidence of irrationality/absurdity in comments and information posted in the Science/Peer Review and Thermageddon subject threads in the DC Forum.

Sure, it's great to be able to have a sort of discussion - e.g., like in this particular discussion forum thread I am posting to now - with different people somewhere else in the world whom you've never met and might never meet. That at least is something we couldn't so easily do in pre-Internet times; but is the quality of the discussion really any better than if you were face-to-face? Is there less absurdity/irrationality or more? Take it to an extreme and ask the same questions of Twitter. There is a stochastic tendency in Nature for things to "regress to the mean" (e.g., with IQs). On the Internet, it seems to be regression to mediocrity/orthodoxy of content (e.g., as in Wikipedia).

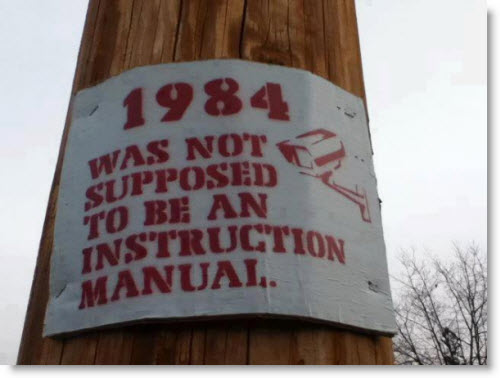

The reality that "...putting a rule into a website's robots.txt or something, to block the Archive from crawling that website, could/would necessarily be used to force a retrospective expunging of all previous archived material from that website" means that truth (history) will be and is already being deliberately expunged/manipulated, as in the 1984 scenario.

Which I guess is the point you were making.

“He who controls the past controls the future. He who controls the present controls the past.”

“But if thought corrupts language, language can also corrupt thought.”

“Until they become conscious they will never rebel, and until after they have rebelled they cannot become conscious.”

― George Orwell, 1984